The Vesuvius Challenge

Are you ready for the latest challenge on Kaggle? All you need to do is use machine learning to resurrect an ancient Roman library from the ashes of a volcano to win $20,000!

Here's how it all started. About two thousand years ago, the father-in-law of Julius Caesar was chilling out in his villa in Herculaneum. This is what his home looked like:

Not too shabby, right? Later, the villa was converted to a vast library containing thousands of papyrus scrolls.

But then mount Vesuvius erupted in 79 AD and covered the entire building in a deep layer of hot mud and volcanic ash. The pages of the papyrus scrolls carbonized and fused together. But in a twist of fate the mud also held them perfectly and prevented them from disintegrating.

In 1750 a farmer in Italy at the site discovered a section of marble while digging a well. The subsequent excavation uncovered statues, frescoes and hundreds of these scrolls.

Unfortunately many attempts to unroll the scrolls led to their destruction. And so historians decided to leave them alone for almost 300 years, waiting for a future era with better tools to analyze them.

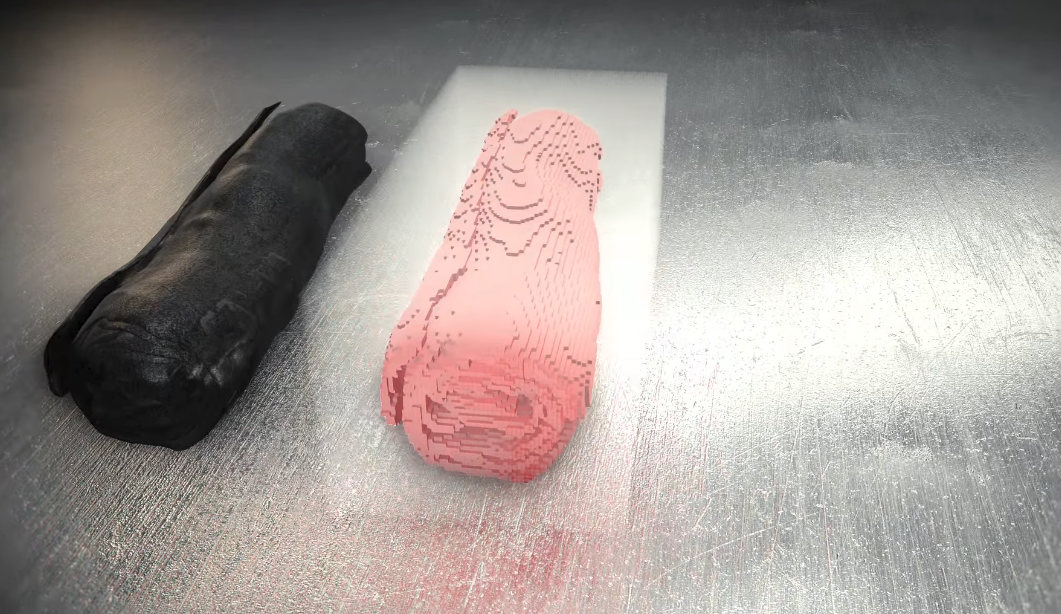

Today the scrolls look like this:

And we finally have the tools to open them.

The Challenge

In 2019, a team led by Dr. Brent Seales at the University of Kentucky used a powerful particle accelerator to generate a high-intensity X-ray beam to scan the scrolls and create a digital copy that we can virtually unroll, without touching or damaging the original scroll.

The ink in the scrolls has a slightly different X-ray radiodensity than the uninked papyrus. By analyzing the digital copy and using the right settings, the ink should show up as light or dark patches. And if we project the 3D image onto a 2D surface (effectively unrolling it), the entire original text should become visible.

Unfortunately there is a snag. The scrolls have been written with carbon-based ink which under X-ray light looks identical to uninked carbonized papyrus. To the human eye, it all looks the same. But machine learning models are able to detect the ink.

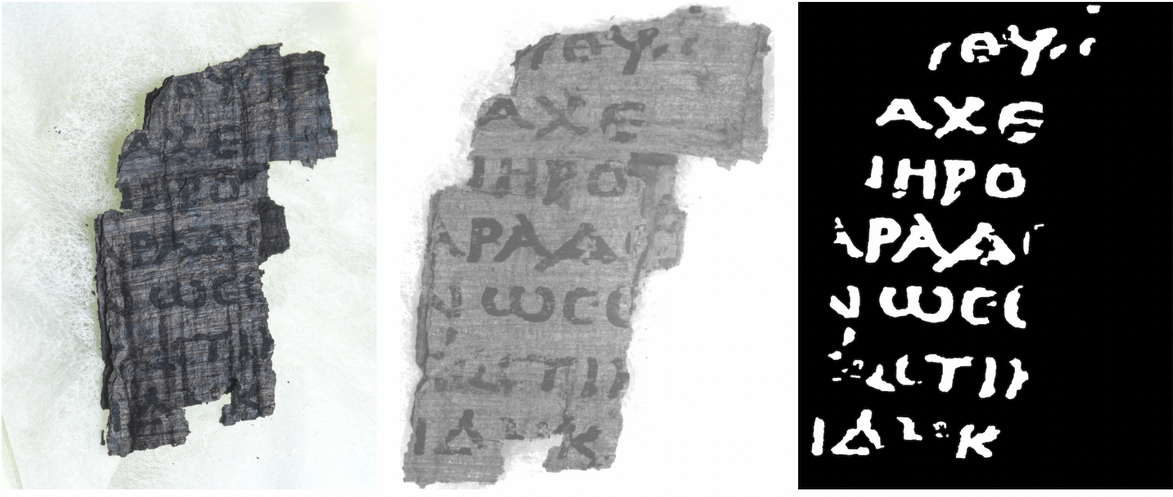

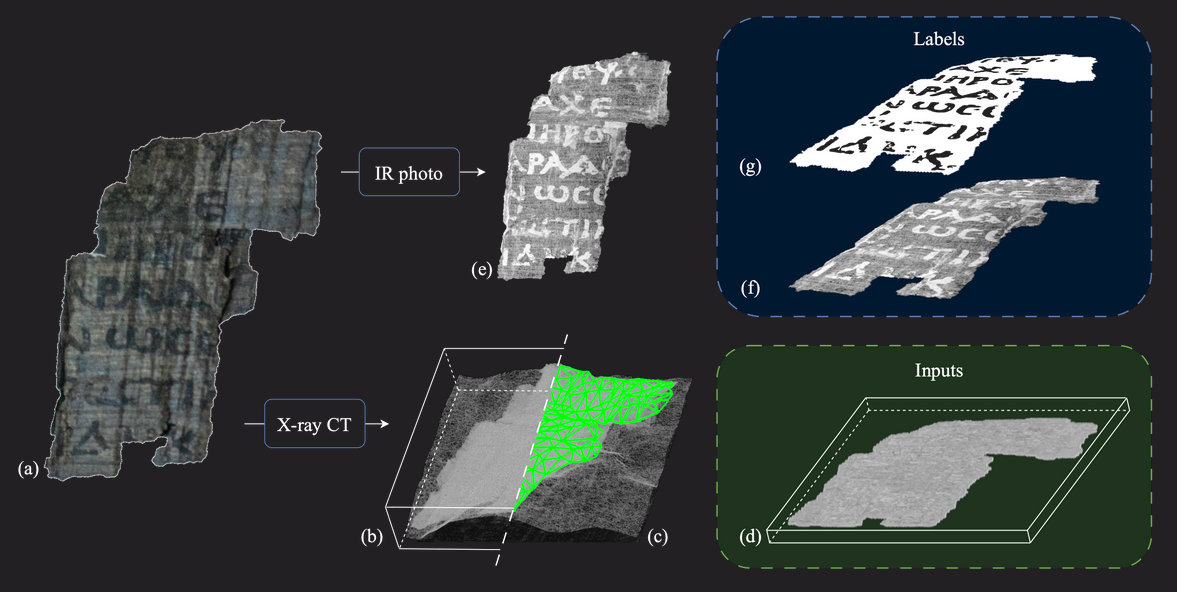

Here's how it works. We have fragments of papyrus that broke off from other scrolls during attempts to open them. On some fragments the ink is still visible, and under infrared (IR) light the text clearly shows up.

We can scan these fragments with X-rays to create a digital copy. We then train a machine learning model on the 3D data and use the pixels in the infrared photo as binary classification labels that specify where the ink is located.

Once the machine learning model is fully trained on the papyrus fragments and produces good quality predictions, we can use the model to analyze the rolled up scroll and generate text predictions for the entire sheet of carbonized papyrus.

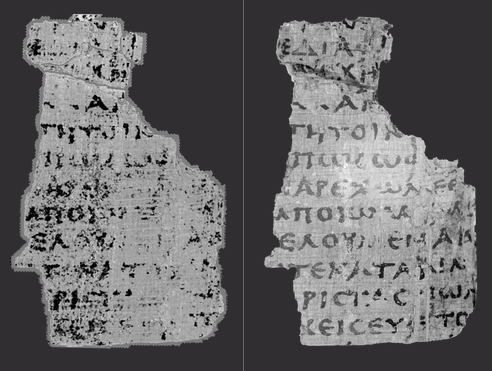

The state of the art in ink detection is Stephen Parson's ink-id program, It's a python app that can detect ink directly from the digital 3D data.

When we run ink-id on one of the fragments, we get this result:

Here's where you come in. Ink-id is doing a great job, but you can do better. Your assignment in this challenge is to create a computer vision model that can analyze the digital copy of the Herculaneum scroll, perform binary classification and predict exactly which image pixels contain ink and which are uninked papyrus.

Given that this challenge involves visual data, you'll probably need a computer vision model. My first choice would be a convolutional neural network with hand-crafted convolution and pooling layers, and a dense classifier at the end.

Here's a python notebook with a simple convolutional neural network to get you started: https://www.kaggle.com/code/jpposma/vesuvius-challenge-ink-detection-tutorial

If you win the Kaggle challenge, you'll receive the $20,000 first prize. There are also $15,000, $10,000 and $5,000 prizes for second, third and fourth place.

You can view and join the challenge here: https://www.kaggle.com/competitions/vesuvius-challenge-ink-detection/

Computer Vision Training

Are you interested in creating your own computer vision models? I have an online training course that will teach you how to build convolutional neural networks using Microsoft's (now discontinued) CNTK machine learning library.

Deep Learning with C# and CNTK

This course will introduce you to Deep Learning and Neural Networks and get you up to speed with Microsoft's Cognitive Toolkit library.

The course will teach you how to build neural networks by stacking neural layers on top of each other. You'll learn how to build a by-the-book convolutional neural network with convolutional and pooling layers and a dense classifier for producing predictions.

In fact, one of the neural networks in the training is probably very similar to what you'll need to complete this Kaggle challenge. It looks like this:

If you want to learn about these kinds of neural networks, then feel free to check out the training. But do keep in mind that the CNTK library is discontinued and no longer supported by Microsoft.